Surprise

Researchers from Waseda University have teamed up with Kyushu-based robot manufacturer tmsuk to develop a humanoid robot that uses its entire body to express a variety of emotions. (Watch video.)

Named "KOBIAN," the android integrates features of two previously developed robots -- the WABIAN-2 bipedal humanoid and the WE-4RII emotion expression humanoid -- into a bipedal machine that can walk around, perceive its environment, perform physical tasks, and express a range of emotions. The robot also features a new double-jointed neck that helps it achieve more expressive postures.

Delight

KOBIAN can express seven different feelings, including delight, surprise, sadness and dislike. In addition to assuming different poses to match the mood, the emotional humanoid uses motors in its face to move its lips, eyelids and eyebrows into various positions. To express delight, for example, the robot lifts its soft rubbery hands over its head and opens its eyes and mouth wide.

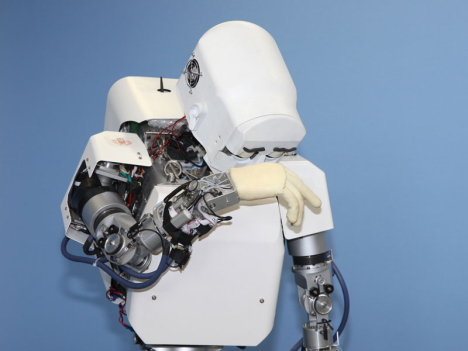

Sadness

To show sadness, the robot slouches over, hangs its head down and holds a hand up to its face in a gesture of grief.

Aversion

According to KOBIAN's developers, the robot's expressiveness makes it better equipped to interact with humans and assist with daily activities. In the future, the robot may seek work in the field of nursing.

[Source: Nikkei Net // Photos, video: Robot Watch]

On June 21, researchers at Waseda University's Institute of Egyptology unveiled the computer-generated facial image of an ancient Egyptian military commander who lived about 3,800 years ago. The image is based on CAT scans taken of a mummy.